Fitness apps fuel AI training boom as Strava, Nike and Peloton data practices raise privacy concerns

A new cybersecurity study has highlighted the growing intersection between the global fitness boom and artificial intelligence, warning that popular workout apps are collecting extensive user data that may be used to train AI and machine learning systems. The findings raise renewed concerns over privacy, consent, and how personal fitness information is processed in the digital health ecosystem.

According to cybersecurity firm Surfshark, global search interest in “fitness” and “personal training” follows a clear seasonal pattern, with spikes every January and April. Over the period since 2022, search interest in “fitness” peaked at a score of 100 in January 2026, while “personal training” rose from 37 in January 2025 to its own peak of 100 in 2026, reflecting growing global engagement with digital fitness platforms.

The report also found a second wave of interest in “fitness” each April, averaging a 13% increase, as users prepare for summer fitness goals. “Personal training” trends follow a similar pattern, with sustained growth through the year and a secondary peak reaching 75 by August.

Fitness apps increasingly rely on AI-driven data collection

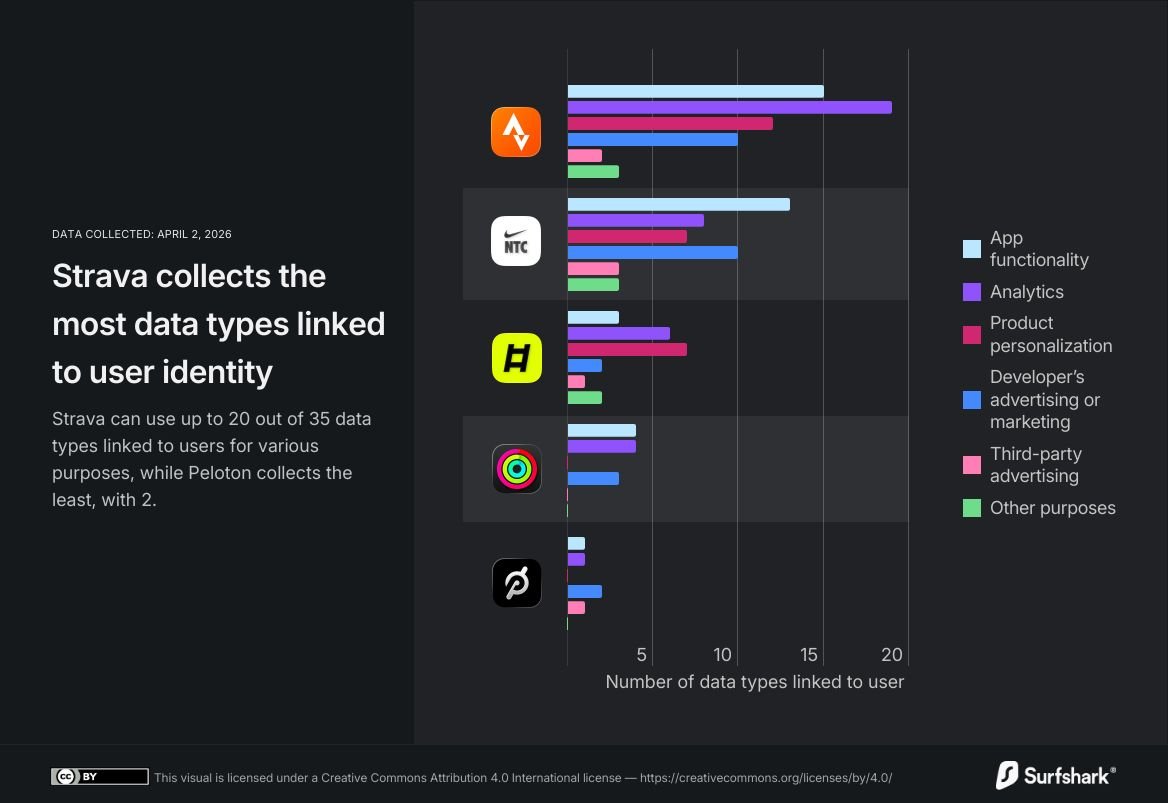

The study warns that artificial intelligence is becoming deeply embedded in fitness platforms, enabling personalized workout recommendations while also increasing the volume of personal data collected. Apps such as Strava, Nike Training Club, and Peloton are among those analyzed for their data practices and AI integration.

Of the 35 data types listed in the Apple App Store, Strava is reported to collect 20 that are linked to user identity, including location data, search history, photos, videos, and other user content. Nike Training Club collects 19 data types, while Peloton collects two.

The companies state that they use aggregated or de-identified data where possible, but the report notes that personal data is still processed for AI and machine learning model development, service improvement, and product optimization. This includes training the systems behind personalization and performance tracking features.

Luis Costa, Research Lead at Surfshark, warned that AI training on personal data without explicit, informed consent raises serious privacy risks. He said users may not fully understand how their information is processed when engaging with AI-powered fitness features.

Tracking practices and biometric data raise further concerns

Beyond AI training, the study highlights how fitness apps use collected data for advertising, analytics, and personalization. Some platforms also access biometric data when integrated with wearables or third-party services, significantly expanding the scope of user profiling.

Ladder, another app analyzed, uses multiple data types for personalization and analytics, including tracking and behavioral insights. The report suggests that while such practices improve the user experience, they also deepen data profiling across platforms.

Four of the five apps reviewed were found to engage in tracking, defined as linking user data across apps or services for targeted advertising or sharing it with data brokers. According to the report, Apple Fitness+ was the only platform that did not engage in tracking.

Costa added that fitness apps can create highly detailed personal profiles that users may not expect, and warned that such data can be exposed in breaches or potentially exploited by malicious actors or third parties.

As AI continues to expand across the fitness industry, the report underscores a growing tension between personalization and privacy, with increased calls for transparency and stronger consent frameworks in digital health technologies.